Main Idea

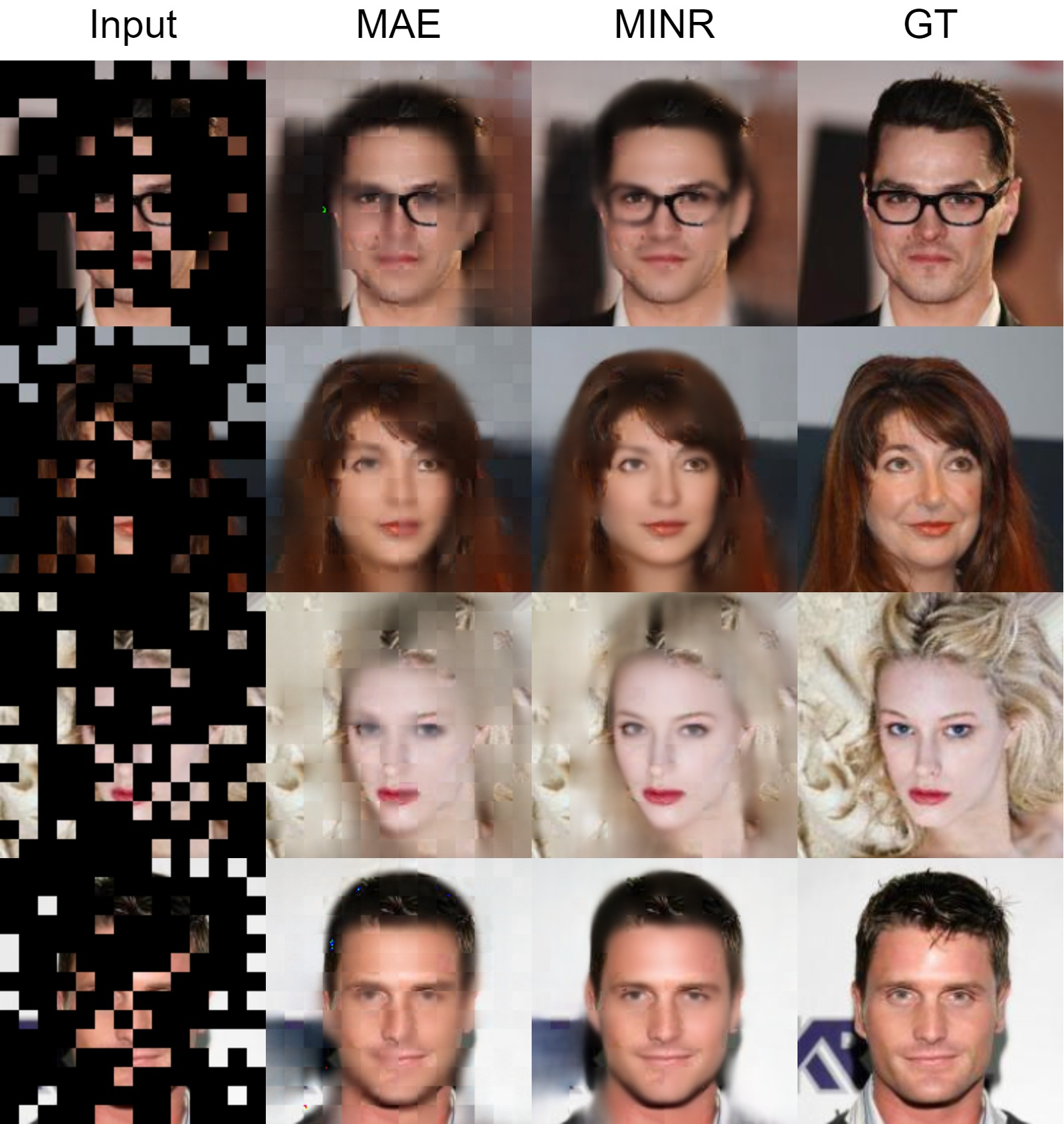

Masked autoencoder (MAE) has been often highlighted for its

versatility and success in various tasks. However, a notable

limitation of MAE is its dependency on masking strategies, such as

mask size and area, as evidenced by several studies. The MAE not only

utilizes adjacent patches but also employs explicit information from

all visible patches to fill each masked patch.

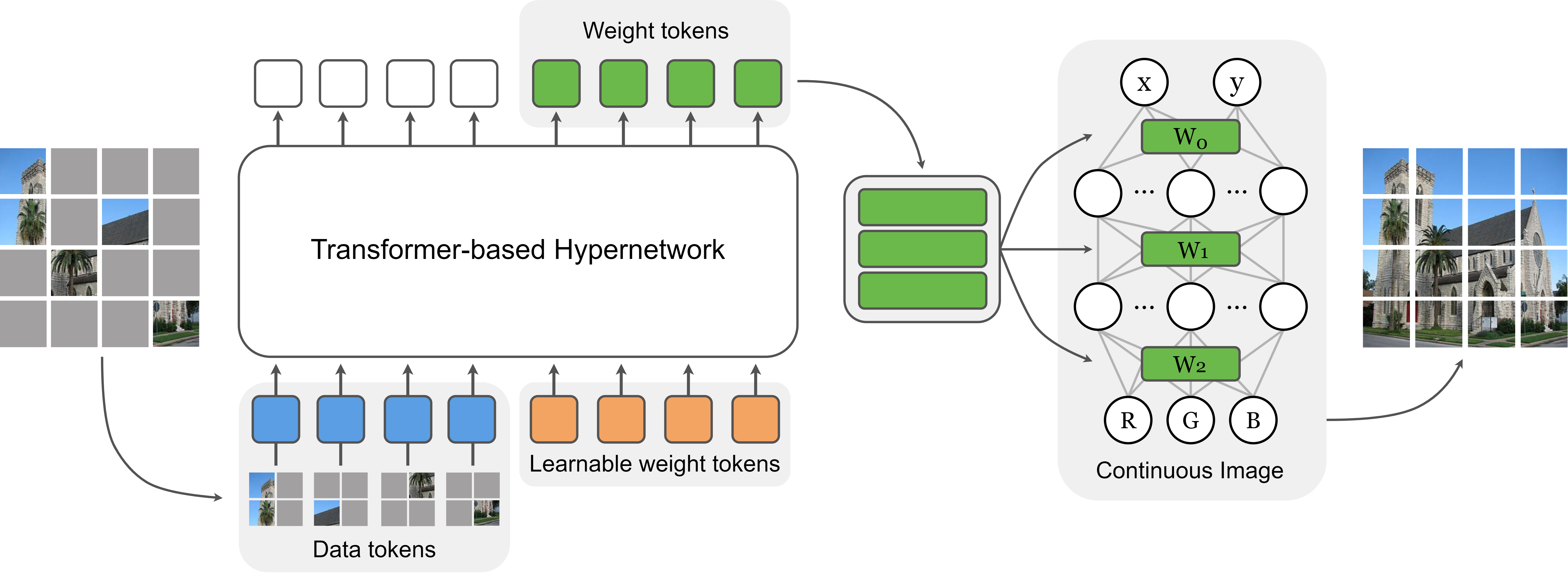

We introduce the masked implicit neural representations (MINR)

framework that combines

implicit neural representations (INRs) with masked image

modelling to address the limitations of MAE. The advantages of MINR

include: i) Leveraging INRs to learn a continuous function less

affected by variations of information in visible patches, resulting in

performance improvements in both in-domain and out-of-distribution

settings; ii) Considerably reduced parameters, alleviating the

reliance on heavy pretrained model dependencies; and iii) Learning

continuous function rather than discrete representations, which

provides greater flexibility in creating embeddings for various

downstream tasks.